From Reaction to Responsibility in Data and AI Systems

Ethical AI is often framed as a technical challenge—bias mitigation, security controls, performance safeguards.

But the most consequential failures in data, AI, and public-sector systems are rarely technical alone.

They are relational.

They emerge when authority is unclear, when responsibilities are defined only after harm occurs, and when the people most affected by a system have no role in defining how it operates in the first place.

This page reflects my approach to Ethical AI as a governance and stewardship practice, grounded in responsibility, consent, and long-term accountability—not just technical compliance.

Technical Failure vs. Relational Failure

Many data breaches, AI harms, and governance breakdowns are described as technical failures.

What they more often reveal is something deeper:

- Unclear or contested authority

- Misaligned incentives and interests

- Responsibility assigned after damage has already occurred

Relational failure occurs when systems are built about people rather than with them—when voice, consent, and accountability are treated as secondary concerns instead of foundational design elements.

Ethical AI begins by addressing this distinction directly.

Indigenous & Community Stewardship as a Systems Lens

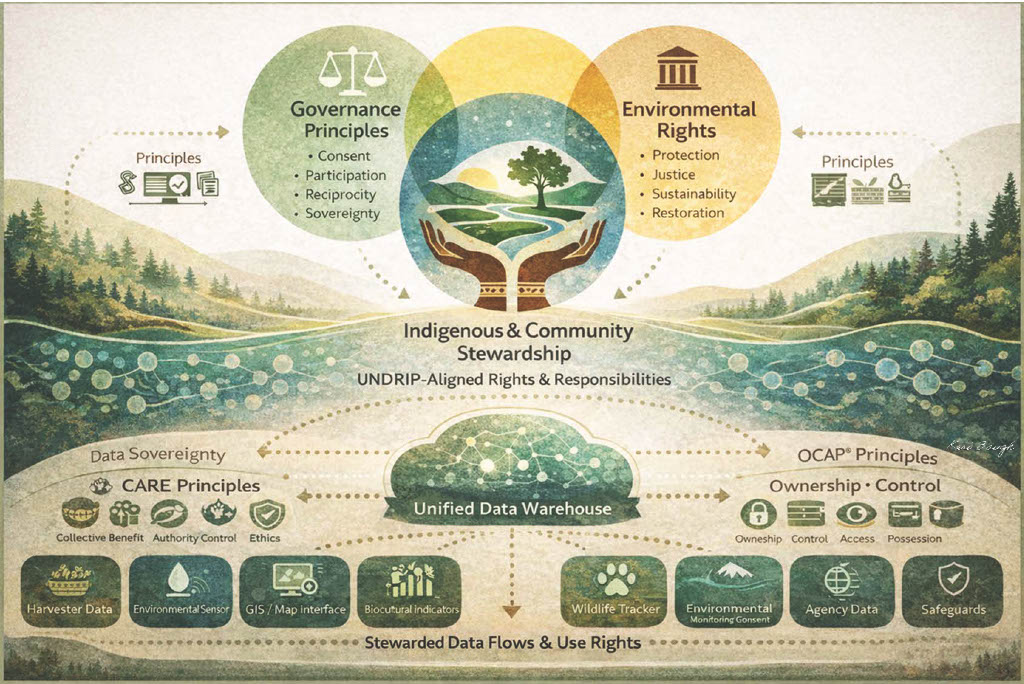

The framework illustrated above centers Indigenous and Community Stewardship, grounded in rights and responsibilities aligned with the United Nations Declaration on the Rights of Indigenous Peoples (UNDRIP). This is not symbolic; it is structural.

At the center:

- Stewardship, not extraction

- Responsibility defined upfront, not retroactively

- Communities recognized as authorities, not just stakeholders

Surrounding this core are governance principles such as:

- Consent

- Participation

- Reciprocity

- Sovereignty

These principles sit alongside commitments to:

- Environmental protection

- Justice

- Sustainability

- Restoration

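Beneath it all, data flows are stewarded according to the CARE Principles for Indigenous Data Governance (Collective benefit, Authority to control, Responsibility, Ethics) and the First Nations principles of OCAP® (ownership, control, access, and possession), with clear use rights, safeguards, and accountability mechanisms.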

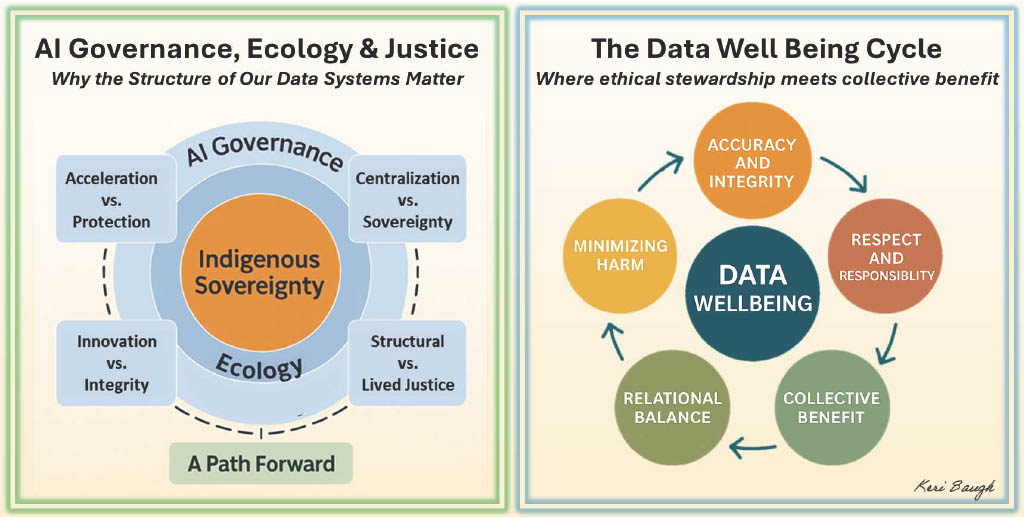
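To make "clear use rights, safeguards, and accountability mechanisms" a little more concrete, here is a minimal sketch of a stewardship gate, assuming a simple setup where each dataset has a recorded set of community-approved purposes. Every name here (`UseRights`, `StewardshipGate`, the dataset, steward, and purposes) is hypothetical and used only for illustration. This is not an implementation of CARE or OCAP®, which are governance principles rather than software specifications. The sketch shows three ideas: access is denied by default when no rights were defined upfront, consent can be withdrawn, and every decision, including refusals, is written to an audit log that names the accountable steward.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class UseRights:
    """Hypothetical record of the uses a community has consented to for one dataset."""
    dataset: str
    steward: str                        # community authority that grants or withdraws consent
    permitted_purposes: frozenset[str]  # purposes agreed in advance, not after the fact
    consent_active: bool = True         # consent can be withdrawn at any time


@dataclass(frozen=True)
class AccessDecision:
    """One audit-log entry: who asked for what, and why it was allowed or refused."""
    dataset: str
    purpose: str
    requester: str
    allowed: bool
    reason: str
    timestamp: str


@dataclass
class StewardshipGate:
    """Checks every data request against recorded use rights and logs the outcome."""
    rights: dict[str, UseRights]
    audit_log: list[AccessDecision] = field(default_factory=list)

    def request(self, dataset: str, purpose: str, requester: str) -> bool:
        record = self.rights.get(dataset)
        if record is None:
            # No rights defined upfront means no access (default deny).
            allowed, reason = False, "no use-rights record; access denied by default"
        elif not record.consent_active:
            allowed, reason = False, f"consent withdrawn by {record.steward}"
        elif purpose not in record.permitted_purposes:
            allowed, reason = False, f"purpose '{purpose}' not approved by {record.steward}"
        else:
            allowed, reason = True, f"purpose approved by {record.steward}"
        # Record every decision, including refusals, so accountability does not depend on memory.
        self.audit_log.append(AccessDecision(
            dataset, purpose, requester, allowed, reason,
            datetime.now(timezone.utc).isoformat(),
        ))
        return allowed


# Usage with hypothetical names:
gate = StewardshipGate(rights={
    "water_quality_2023": UseRights(
        dataset="water_quality_2023",
        steward="Community Data Council",
        permitted_purposes=frozenset({"environmental_monitoring"}),
    ),
})
print(gate.request("water_quality_2023", "environmental_monitoring", "analyst_a"))  # True
print(gate.request("water_quality_2023", "commercial_modeling", "analyst_b"))       # False
```

The design choice worth noting is that the gate never decides on its own authority: it only applies what the steward recorded beforehand, and the log makes each outcome traceable back to that authority.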

Ethical AI as Relational Design

This is not simply a better technical model.

It is a relational one.

When consent, care, and responsibility are designed in before deployment—rather than patched on after harm—systems behave differently over time:

- They earn trust rather than demand it

- They sustain continuity under pressure

- They serve collective benefit rather than narrow optimization

Ethical AI, in this sense, is not a constraint on innovation.

It is a condition for durability.

How This Shapes My Data Science Practice

Within my work, Ethical AI shows up as:

- Governance-aware model design and monitoring

- Attention to authority, accountability, and use rights—not just outputs

- Alignment with Indigenous data governance principles where relevant

- Evaluation of systems under stress, not just at deployment

- Treating disruption as diagnostic information rather than failure

The goal is not perfection, but integrity that persists as conditions change.

Designing Responsibility Upfront

The shift from reaction to responsibility is the real work of Ethical AI.

When systems are designed with clear authority, shared responsibility, and relational accountability from the start, harm becomes less likely—and when it does occur, it is addressed within a structure that already knows who is responsible, to whom, and why.

That is what it means to design integrity into AI systems—not as an afterthought, but as a foundation.
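As one illustration of answering "who is responsible, to whom, and why" before deployment rather than after harm, the sketch below treats those answers as required fields and refuses to approve a deployment while any of them is blank. It assumes a hypothetical `AccountabilityRecord` with fields chosen for this example (`responsible_party`, `accountable_to`, `rationale`, `redress_contact`); the system and role names are likewise invented. A real review would involve people and communities, not just a field check. The code only shows the principle that undefined responsibility blocks release.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class AccountabilityRecord:
    """Hypothetical pre-deployment record: who is responsible, to whom, and why."""
    system: str
    responsible_party: str  # who answers for the system's behavior
    accountable_to: str     # the community or authority owed an account
    rationale: str          # why the system exists and what it is for
    redress_contact: str    # where affected people can raise harms


def missing_fields(record: AccountabilityRecord) -> list[str]:
    """Names of fields left blank, i.e. responsibilities not yet defined."""
    return [f.name for f in fields(record) if not getattr(record, f.name).strip()]


def approve_deployment(record: AccountabilityRecord) -> bool:
    """Block deployment until every accountability field is filled in."""
    gaps = missing_fields(record)
    if gaps:
        print(f"Deployment of {record.system} blocked; undefined: {', '.join(gaps)}")
        return False
    return True


# Usage with hypothetical names:
record = AccountabilityRecord(
    system="eligibility_model_v2",
    responsible_party="Data Science Lead",
    accountable_to="Community Data Council",
    rationale="Prioritize outreach for housing support",
    redress_contact="",
)
approve_deployment(record)
# prints: Deployment of eligibility_model_v2 blocked; undefined: redress_contact
```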